There are hundreds of thousands of AI agents running in production today that can send emails, move money, modify databases, and talk to customers — without a single policy governing what they're allowed to do. No audit trail. No kill switch. No way to prove, after the fact, what happened and why.

We know this because we built a lot of them.

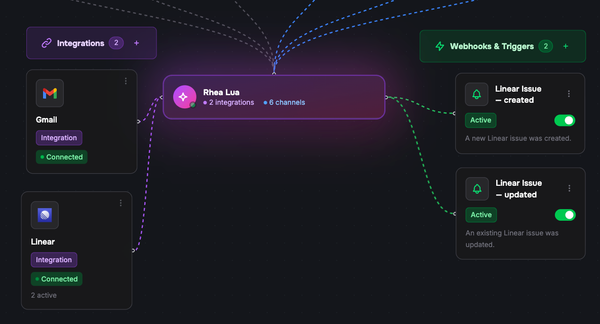

Lua AI has deployed 5,000+ agents across 160 businesses in 10 countries. Sales agents that negotiate deals. Support agents that process refunds. Booking agents that commit calendar slots and take payments. These aren't chatbots — they take real actions with real consequences. And for the last two years, the industry's answer to "how do we govern this?" has been: we don't.

Today we're open-sourcing the tool we built to fix that.

This is Lua's first open-source release. Until now, everything we've built — the agent runtime, the deployment platform, the orchestration layer — has been proprietary. We're choosing to make governance the first thing we open up because we believe this layer is too important to be closed. The infrastructure that decides what AI agents can and cannot do in production needs to be transparent, auditable, and community-owned. If we're asking teams to trust governance tooling with their most sensitive agent operations, the least we can do is let them read every line of it.

The Gap Nobody's Filling

Every major AI agent framework gives you powerful primitives for building agents. They let you wire up tools, manage context, orchestrate multi-step workflows. They are genuinely impressive pieces of engineering.

None of them answer a basic question: what is this agent allowed to do?

Not "what can it do" — what is it allowed to do? Which tools can it call? Under what conditions? Who approved it? What happens when it goes wrong? Where's the proof?

This isn't a hypothetical concern. An agent with access to a shell will eventually execute commands it shouldn't. An agent with access to a payment API will eventually process a transaction it shouldn't. An agent with access to customer data will eventually surface information it shouldn't. The question isn't if — it's whether you'll know when it happens.

Guardrails exist, but they solve a different problem. Content filters and prompt guards sit between the user and the model. They govern what goes in and what comes out. They don't govern what the agent does — the tool calls, the API requests, the database mutations that happen in the execution layer. That's where the actual risk lives.

What We Built

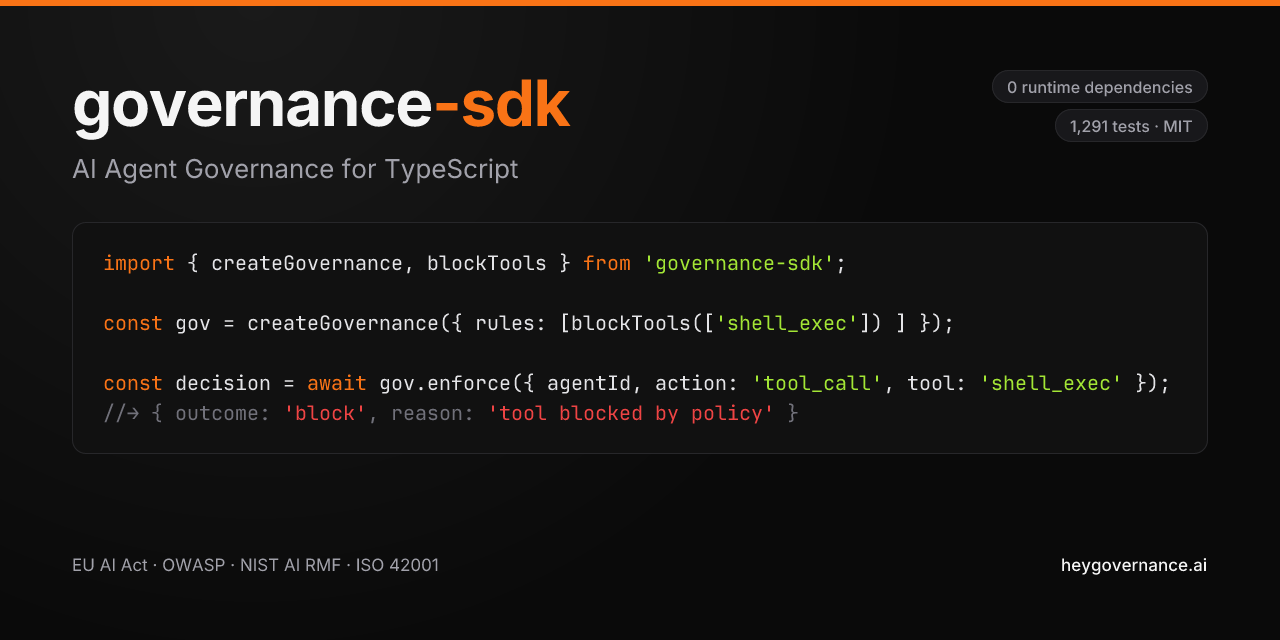

governance-sdk is a zero-dependency TypeScript SDK that enforces policy before every agent action executes. Three things make it real governance, not just monitoring:

Interception, not observation. The SDK sits between the agent and the tool call — before it fires, not after. You can't govern what you can't intercept. And critically, governance never crashes your agent. If the policy engine encounters an error, it fails open by default. Your agent keeps running. The governance layer is additive safety, not a new point of failure.
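
To make "interception with fail-open" concrete, here is a minimal sketch of a policy check wrapped around a tool call. Every name in it (`interceptToolCall`, `PolicyCheck`, `BlockedError`, the `noShell` policy) is a hypothetical illustration, not governance-sdk's actual API.

```typescript
// Hypothetical names throughout; this is not governance-sdk's API.
type Decision = "allow" | "block";

interface ToolCall {
  agentId: string;
  tool: string;
  args: Record<string, unknown>;
}

type PolicyCheck = (call: ToolCall) => Decision;

class BlockedError extends Error {}

// Evaluate policy *before* the tool runs. If evaluation itself throws,
// fail open: warn and allow, so a governance bug never crashes the agent.
async function interceptToolCall<T>(
  call: ToolCall,
  check: PolicyCheck,
  execute: () => Promise<T>,
): Promise<T> {
  let decision: Decision;
  try {
    decision = check(call);
  } catch (err) {
    console.warn(`policy engine error, failing open: ${String(err)}`);
    decision = "allow";
  }
  if (decision === "block") {
    // The tool's execute function is never invoked for a blocked call.
    throw new BlockedError(`${call.agentId} is not allowed to call ${call.tool}`);
  }
  return execute();
}

// Example policy: no agent may use the shell tool.
const noShell: PolicyCheck = (call) => (call.tool === "shell" ? "block" : "allow");
```

Failing open trades strictness for availability. A fail-closed variant would set the decision to `"block"` in the `catch` branch instead, which may suit especially sensitive tools such as payments.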

Identity that means something. The SDK knows which agent is attempting which action, at what trust level, in what context. Trust levels aren't static configuration — they adjust based on how the agent actually behaves in production. An agent that consistently triggers policy blocks or injection alerts sees its trust level degrade automatically. An agent with clean audit history earns more autonomy. This is the difference between governance-as-config and governance-as-signal.
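
Behaviour-driven trust can be sketched as a running score per agent that violations pull down and clean actions slowly push up. The weights, thresholds, starting score, and level names below are assumptions for illustration, not the SDK's scoring model.

```typescript
// Hypothetical trust model for illustration; the SDK's real scoring may differ.
type TrustLevel = "restricted" | "standard" | "elevated";
type Outcome = "clean" | "policy_block" | "injection_alert";

// Assumed weights: a violation costs far more than one clean action earns.
const DELTA: Record<Outcome, number> = {
  clean: 0.01,
  policy_block: -0.1,
  injection_alert: -0.2,
};

const START = 0.5; // assumed starting score for an agent with no history

class TrustTracker {
  private scores = new Map<string, number>();

  // Update the agent's score after each governed action, clamped to [0, 1].
  record(agentId: string, outcome: Outcome): void {
    const next = this.score(agentId) + DELTA[outcome];
    this.scores.set(agentId, Math.min(1, Math.max(0, next)));
  }

  score(agentId: string): number {
    return this.scores.get(agentId) ?? START;
  }

  // Map the continuous score onto coarse levels that policies can reference.
  level(agentId: string): TrustLevel {
    const s = this.score(agentId);
    if (s < 0.3) return "restricted";
    if (s >= 0.8) return "elevated";
    return "standard";
  }
}
```

A policy could then, for example, require human approval for sensitive tools unless the calling agent's level is `"elevated"`.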

Decisions, not logs. Block. Approve. Require human sign-off. Mask sensitive data in the response. These are real-time decisions made before the action executes, not log entries you review after the damage is done. When an action requires human approval, the SDK manages the entire pause-and-resume flow — the agent waits for authorisation, and proceeds or stops based on the outcome.
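
The four decision types and the pause-and-resume approval flow can be sketched as a discriminated union plus an awaited approver. The `Decision` shape, the `Approver` callback standing in for a human reviewer, and the e-mail-only masking rule are all assumptions made for this example, not the SDK's types.

```typescript
// Hypothetical decision model for illustration; not the SDK's actual types.
type Decision =
  | { kind: "allow" }
  | { kind: "block"; reason: string }
  | { kind: "require_approval"; reason: string }
  | { kind: "mask" };

// Stand-in for a human reviewer: resolves true (approved) or false (denied).
type Approver = (summary: string) => Promise<boolean>;

// Simple e-mail pattern used as the example of "sensitive data" to mask.
const EMAIL = /[\w.+-]+@[\w-]+(\.[\w-]+)+/g;

async function enforce(
  decision: Decision,
  action: string,
  execute: () => Promise<string>,
  approver: Approver,
): Promise<string> {
  switch (decision.kind) {
    case "allow":
      return execute();
    case "block":
      throw new Error(`blocked: ${decision.reason}`);
    case "require_approval": {
      // The agent pauses here until the reviewer responds.
      const approved = await approver(`${action}: ${decision.reason}`);
      if (!approved) throw new Error(`denied by reviewer: ${action}`);
      return execute();
    }
    case "mask": {
      // Run the action, then redact e-mail addresses before the agent sees them.
      const result = await execute();
      return result.replace(EMAIL, "[redacted]");
    }
    default: {
      // Compile-time exhaustiveness check over the union.
      const unreachable: never = decision;
      throw new Error(`unknown decision: ${JSON.stringify(unreachable)}`);
    }
  }
}
```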

Why Open Source, Why Now

Two reasons. One principled, one pragmatic.

The principled reason: governance infrastructure shouldn't be proprietary. If the industry is going to trust that AI agents are operating within bounds, the enforcement layer needs to be inspectable. You should be able to read every line of code that decides whether your agent's action goes through or gets blocked. MIT license. Zero dependencies. No black boxes.

The pragmatic reason: the EU AI Act's high-risk system requirements take effect on August 2, 2026. That's less than four months away. Article 9 requires risk management. Article 12 requires record-keeping. Article 14 requires human oversight, including the ability to interrupt the system. Article 15 requires accuracy, robustness, and cybersecurity measures. If you're deploying AI agents that make decisions in employment, credit, education, healthcare, or law enforcement contexts, these aren't suggestions. They're law, with fines of up to €15 million or 3% of global annual turnover for breaching high-risk obligations (the higher ceiling of €35 million or 7% applies to prohibited practices).

Most organisations haven't started. Only 8 of 27 EU member states have even designated enforcement authorities. Harmonised standards are delayed. But the deadline, as of today, still stands.

The SDK maps your governance posture against the EU AI Act, NIST AI RMF, ISO 42001, and the OWASP Top 10 for Agentic AI. These are cross-reference tools, not certifications, not legal advice — but they show you exactly where you stand and what's missing.
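
As a rough illustration of what a cross-reference (rather than a certification) can look like, the sketch below maps hypothetical control names to the four EU AI Act articles listed earlier and reports articles whose mapped controls aren't all enabled. The control names and the mapping are simplified assumptions, not the SDK's data and not legal advice.

```typescript
// Illustrative only: hypothetical control names mapped to EU AI Act articles.
// This is a simplified assumption, not the SDK's mapping and not legal advice.
type Control =
  | "policy_enforcement"
  | "audit_trail"
  | "human_approval"
  | "kill_switch"
  | "injection_detection";

const EU_AI_ACT: Record<string, { title: string; controls: Control[] }> = {
  "Art. 9": { title: "Risk management", controls: ["policy_enforcement"] },
  "Art. 12": { title: "Record-keeping", controls: ["audit_trail"] },
  "Art. 14": { title: "Human oversight", controls: ["human_approval", "kill_switch"] },
  "Art. 15": { title: "Accuracy, robustness and cybersecurity", controls: ["injection_detection"] },
};

// Flag an article as a gap unless every control mapped to it is enabled.
function gapReport(enabled: Set<Control>): string[] {
  return Object.entries(EU_AI_ACT)
    .filter(([, req]) => !req.controls.every((c) => enabled.has(c)))
    .map(([article, req]) => `${article} (${req.title})`);
}
```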

What Honest Looks Like

We want to be direct about what this is and what it isn't, because we think the AI tooling ecosystem has a credibility problem. Too many projects claim capabilities they don't have.

The injection detection system uses pattern matching across 7 attack categories with normalisation for encoding evasion techniques. It scores F1=0.48 on our published benchmark of 6,931 labelled samples. That's useful as defence-in-depth — catching known attack patterns before they reach your agent. It's not a substitute for a dedicated ML classifier, and we don't pretend it is. The SDK exposes an interface so you can plug one in when your threat model demands it.
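
The "normalise, then match" approach and the pluggable interface can be sketched as follows. The two categories and their regexes are hypothetical examples (the SDK's seven categories and rules aren't reproduced here), and `InjectionDetector` is an assumed interface name.

```typescript
// Minimal sketch of normalise-then-match detection. Categories and patterns
// are hypothetical examples, not the SDK's rule set.
interface Detection {
  flagged: boolean;
  categories: string[];
}

// Pluggable interface: a pattern detector or an ML classifier can implement it.
interface InjectionDetector {
  detect(text: string): Promise<Detection>;
}

// Undo common evasion tricks before matching: Unicode compatibility forms
// (e.g. full-width letters), zero-width characters, case, and spacing.
function normalise(text: string): string {
  return text
    .normalize("NFKC")
    .replace(/[\u200B-\u200D\u2060\uFEFF]/g, "")
    .toLowerCase()
    .replace(/\s+/g, " ");
}

const PATTERNS: Record<string, RegExp[]> = {
  instruction_override: [/ignore (all )?(previous|prior) instructions/],
  prompt_exfiltration: [/(reveal|print|show) (your|the) system prompt/],
};

class PatternDetector implements InjectionDetector {
  async detect(text: string): Promise<Detection> {
    const clean = normalise(text);
    const categories = Object.entries(PATTERNS)
      .filter(([, regexes]) => regexes.some((r) => r.test(clean)))
      .map(([category]) => category);
    return { flagged: categories.length > 0, categories };
  }
}
```

Because callers depend only on the interface, a classifier-backed implementation could replace `PatternDetector`, or run alongside it, without changing the enforcement code.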

The audit trail is tamper-evident by design: events are HMAC-SHA256 hash-chained, so modifying any event breaks the chain, and verification detects it. But it's opt-in. The core enforcement path is optimised for speed: policy evaluation takes milliseconds, and audit writes happen asynchronously so they don't add latency to your agent's responses.
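
A hash chain like this can be sketched in a few lines with Node's built-in `crypto` module: each entry's MAC covers the previous entry's MAC plus the serialised event, so verification recomputes every link in order. The event fields and serialisation are assumptions, not the SDK's format. Note that a chain on its own can't detect entries truncated from the end; that needs the latest MAC stored somewhere else.

```typescript
import { createHmac } from "node:crypto";

// Minimal sketch of an HMAC-SHA256 hash chain. Field names and serialisation
// are assumptions, not the SDK's audit format.
interface AuditEvent {
  agentId: string;
  action: string;
  decision: string;
  timestamp: string;
}

interface ChainedEvent {
  event: AuditEvent;
  prevMac: string; // MAC of the previous entry ("" for the first entry)
  mac: string; // HMAC(key, prevMac + serialised event)
}

// Assumes events are built with a consistent key order, so JSON.stringify is stable.
function computeMac(key: string, prevMac: string, event: AuditEvent): string {
  return createHmac("sha256", key)
    .update(prevMac)
    .update(JSON.stringify(event))
    .digest("hex");
}

function append(chain: ChainedEvent[], key: string, event: AuditEvent): void {
  const prevMac = chain.length > 0 ? chain[chain.length - 1].mac : "";
  chain.push({ event, prevMac, mac: computeMac(key, prevMac, event) });
}

// Recompute every link: an edited entry, or one removed or reordered
// mid-chain, produces a mismatch.
function verify(chain: ChainedEvent[], key: string): boolean {
  let prevMac = "";
  for (const entry of chain) {
    if (entry.prevMac !== prevMac) return false;
    if (entry.mac !== computeMac(key, prevMac, entry.event)) return false;
    prevMac = entry.mac;
  }
  return true;
}
```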

The kill switch stops an agent immediately at the process level. If you need fleet-wide emergency halt across distributed infrastructure, that's a centralised state problem — exactly the kind of thing our hosted service handles.
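
A process-level kill switch can be sketched with the standard `AbortController`: once tripped, every guarded tool call is refused, and in-flight work that honours the passed `AbortSignal` (for example `fetch`) can cancel. `KillSwitch` and `guard` are hypothetical names. The sketch also shows the limitation described above: the controller lives in one process, so other instances keep running.

```typescript
// Minimal sketch of an in-process kill switch; names are hypothetical.
// It halts only agents running in this process, which is why fleet-wide
// halts need shared, centralised state.
class KillSwitch {
  private controller = new AbortController();

  trip(reason: string): void {
    this.controller.abort(reason);
  }

  get tripped(): boolean {
    return this.controller.signal.aborted;
  }

  // Guard every tool call: refuse new actions once the switch is tripped,
  // and hand the signal to the action so it can cancel cooperatively.
  async guard<T>(execute: (signal: AbortSignal) => Promise<T>): Promise<T> {
    if (this.tripped) {
      throw new Error(`agent halted: ${String(this.controller.signal.reason)}`);
    }
    return execute(this.controller.signal);
  }
}
```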

We publish these limits because honesty scales better than hype. You can read the benchmarks, inspect the source, and decide whether it fits your use case. That's the point of open source.

The Question for Security Leaders

If you're a CISO or Head of AI evaluating agent deployments, here's what should concern you: can you prove what your agents did last Tuesday?

Not what they were configured to do. Not what the prompt said. What they actually did. Every tool call. Every decision. Every piece of data they accessed. With cryptographic proof that the record hasn't been altered.

If the answer is no, you have an audit gap that no amount of prompt engineering will close. Agents aren't deterministic systems — they make probabilistic decisions that lead to real-world actions. Governing them requires the same rigour you'd apply to any system that moves money, accesses PII, or makes decisions that affect people's lives.

The good news: the underlying patterns are well understood. Policy enforcement, tamper-evident audit trails, human oversight, and kill switches have decades of systems-engineering practice behind them. They just haven't been applied systematically to AI agents in production.

The Question for Product Leaders

If you're a CPO or Head of AI building with agents, here's a different question: how fast can your team ship agents that your compliance team will approve?

Governance isn't just a security concern — it's a velocity concern. The teams that embed governance from day one ship faster than the teams that bolt it on later, because they never have to stop and retrofit. They never have to explain to legal why there's no audit trail. They never have to tell a client they can't prove what happened.

The SDK works with the frameworks your team is already using: Mastra, Vercel AI SDK, OpenAI Agents, LangChain, Anthropic, and more, with ten adapters covering the major frameworks in the TypeScript agent ecosystem. Adding governance is a single import, not an architecture change.

Getting Started

npm install governance-sdk

The full source is on GitHub. The documentation is at heygovernance.ai. MIT licensed, zero dependencies, and it stays that way.

For teams that need distributed enforcement, ML-powered injection detection, fleet-wide kill switches, approval workflows, and a real-time governance dashboard — that's Lua Governance Cloud. The open-source SDK is the foundation. The cloud is where it scales.

What Comes Next

Open-sourcing governance-sdk is step one. We're building towards a world where every AI agent in production is governed, auditable, and accountable — not because a regulation demanded it, but because it's the only responsible way to deploy software that makes decisions on behalf of people.

The teams shipping AI agents today are building the infrastructure that will power the next decade of business. That infrastructure deserves governance that's as rigorous as the agents themselves.

We'd rather build it in the open.

governance-sdk is MIT licensed, zero-dependency, and available today on GitHub and npm, with documentation at heygovernance.ai.